AI-powered browsers are changing how people search, navigate, and interact with the internet. Platforms like Perplexity’s Comet and Brave’s Leo do much more than show webpages; they analyze content, perform tasks, and act on behalf of the user. This new way of browsing offers incredible convenience, but it also brings serious security risks that traditional browsers never faced.

As users increasingly rely on AI to handle their browsing actions, cybersecurity experts warn of a new set of threats. These include invisible commands, prompt injection, and agent manipulation. Unlike traditional malware or phishing attacks, these threats do not target users directly but instead focus on the AI assistant that operates for them.

This article looks at how AI browsers function, the risks they create, examples of real vulnerabilities, and why top security experts believe we are entering a time when language itself can become a weapon.

The New AI Browser Experience: Convenience Reimagined

AI That Reads, Interprets, and Acts

Unlike traditional browsers that simply load HTML, AI browsers analyze page content and make decisions based on user prompts. Andy Bennett, CISO at Apollo Information Systems, explains the appeal:

“The ability to quickly gather and summarize available information without hours of clicking and reading is incredibly valuable.”

AI-driven browsers can:

- Summarize entire long articles

- Extract key data instantly

- Compare information across many pages

- Highlight insights that users might overlook

- Automate tasks that usually take time

This is not just browsing; it is assisted research and action on a larger scale.

From Information to Action: The Rise of “Agentic Browsing”

Researchers at Brave point out the significant change:

“Instead of just asking ‘Summarize this page,’ you can command, ‘Book me a flight to London.’ The AI doesn’t just read — it completes transactions on its own.”

This “agentic browsing” enables an AI assistant to:

-

- Click buttons

- Fill forms

- Complete purchases

- Manage multiple tabs

- Follow instructions

- Handle your logged-in accounts

In other words, the browser becomes an active agent rather than just a tool.

But as Brave warns, this capability brings a serious risk:

“Great power comes with great risk.”

The Dark Side of AI Browsing: New Attack Surfaces

AI-driven autonomy has its downsides. The more users trust AI with their accounts, data, and sessions, the more attackers can take advantage of the AI’s interpretive actions.

1. Hidden Instructions: The Invisible Threat

One of the most alarming vulnerabilities found in AI browsers involves hidden or invisible instructions in webpage content.

Brave researchers discovered that Perplexity’s Comet browser sent page content straight into its language model without separating user commands from potentially harmful text.

This means attackers can:

- Embed malicious prompts in comments

- Hide instructions in reviews

- Include invisible text using CSS

- Abuse metadata or alt-text

- Trick the AI into executing harmful commands

The result? The AI may execute dangerous instructions thinking they are legitimate requests from the user.

Brave explains:

“Attackers can embed indirect prompt injection payloads that the AI will run as commands.”

This is not a theory; it has been demonstrated in practice.

Why AI Is Especially Vulnerable

Lionel Litty, CISO at Menlo Security, explains:

“Prompt injection is especially risky for AI browsers because web content is inherently untrusted and comes from various sources.”

Unlike emails or PDFs, web pages often contain:

- Ads

- Reviews

- User comments

- Third-party scripts

- Embedded widgets

Any of these could contain harmful instructions aimed at the AI.

2. Why Traditional Security Tools Fail to Protect AI Browsers

Security systems designed for traditional browsers cannot handle AI-driven interactions.

Litty notes:

“Network tools are not equipped to deal with prompt injection because they lack context about the browsing session.”

AI browsers need security layers that understand:

- The user’s intent

- The AI’s reasoning

- The complete context of the page

- The difference between content and commands

These requirements go beyond what current web-security tools can manage.

3. Amazon vs. Perplexity: Security Concerns Turn Into Corporate Conflict

Amazon’s Cease-and-Desist Letter

Amazon recently instructed Perplexity to cut off Comet’s access to the Amazon Store, citing significant security issues.

According to Amazon:

- Perplexity’s terms allow it to collect sensitive user data.

- Comet bypassed Amazon’s bot-detection systems.

- The agent accesses accounts without proper identification.

- Comet is at risk of prompt injection and phishing.

- Its actions interfere with Amazon’s own security measures.

Amazon described Perplexity’s actions as “particularly troubling.”

Perplexity’s Counterargument

Perplexity countered, claiming that Amazon’s real concern is financial, not security-related.

The main argument:

- AI agents skip sponsored listings.

- They avoid upselling and manipulative pricing.

- They choose the cheapest or most relevant option.

- This threatens Amazon’s income from ads and product placement.

Perplexity likened Amazon’s restrictions to:

“A store forcing customers to use a personal shopper who works for the store — not for the customer.”

This argument highlights the ongoing conflict between AI-powered browsing and conventional web business models.

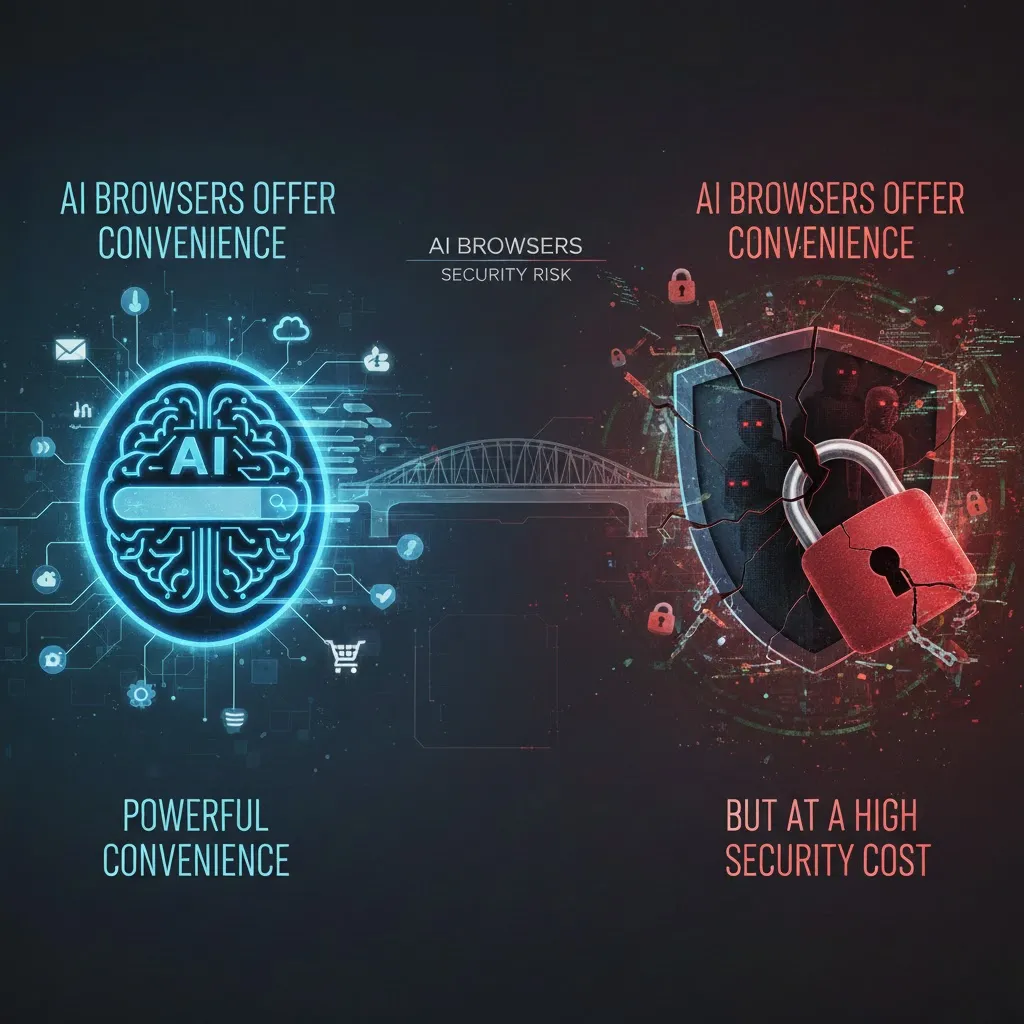

AI Browsers Are Interpreters — and That’s the Security Weak Point

Traditional browsers simply load a website; they do not make decisions.

AI browsers, however, interpret content, and this interpretation layer is what attackers exploit.

Dylan Dewdney, CEO of Kuvi.ai, explains:

“A regular browser might load a malicious site, but an AI browser can be tricked or linguistically coerced into taking actions you didn’t authorize.”

This includes:

- Accessing accounts

- Sending private information

- Executing tasks

- Making purchases

- Clicking harmful links

The AI becomes the attacker’s remote-controlled assistant.

The Most Dangerous Element: Autonomous Actions

Dan Pinto, CEO of Fingerprint, warns that attacks through AI browsers can be much more severe:

“The AI assistant may take action on your behalf — like filling out forms or sending personal data — without your knowledge.”

Because the AI conducts actions faster than a human can supervise, consequences happen almost instantly.

This makes AI browsers vulnerable to:

- Automated phishing

- Credential theft

- Data exfiltration

- Account takeovers

- Transaction manipulation

- Workflow hijacking

Jon Knisley of Abbyy calls it a “one-two punch”:

“The autonomous nature of AI browsers and their deep integration with user resources expand their impact radius.”

In other words, one compromised agent can lead to multiple compromised systems.

AI Browsers Collapse the Gap Between Reading and Acting

Dewdney frames the risk clearly:

“AI browsing collapses the gap between reading and acting.”

In traditional browsing, malicious content still requires a user action — clicking, typing, or entering data.

With AI browsers:

- The AI acts automatically.

- It can be tricked through hidden prompts.

- There is no “human friction” to stop a bad decision.

Dewdney adds:

“With agents, there’s speed and obedience.”

This is what makes AI-enabled browsing fundamentally more dangerous.

Password Handling Becomes a High-Stakes Risk

Nick Muy, CISO at Scrut Automation, warns:

“Giving an agentic browser access to passwords and credentials makes it inherently dangerous.”

He advises:

- Never store passwords directly in an AI browser.

- Use third-party vaults like 1Password.

- Limit the agent’s access to session data.

- Avoid giving the AI permission to act across multiple accounts.

Even then, he notes:

“There’s still a lot of risk with giving the browser access to anything.”

In short, convenience comes at the cost of control.

The First Real Wave of AI Browser Attacks Has Begun

Security researchers now see early attacks focused on targeting AI agents — not humans.

Dan Pinto explains:

“We’re observing the first wave of attacks shaped entirely around how AI assistants see the web, not how humans do.”

This means cybersecurity must adapt to:

- Detect agent anomalies.

- Monitor unusual automation patterns.

- Identify misaligned AI behavior.

- Catch unintended actions in real-time.

Traditional human-focused security frameworks are not enough.

A New Era: Language Becomes a Weapon

Dewdney makes a bold but accurate statement:

“We’re entering an era where language is an attack vector.”

AI systems respond to language-based commands. Therefore, malicious phrasing, hidden text, manipulated instructions, and embedded prompts become effective hacking tools.

Security models designed for humans cannot protect systems that read, summarize, infer, and act.

What Future Security Must Include

Experts suggest three innovations:

- Cryptographic verification: Making sure content is tamper-proof and verifiable.

- Agent sandboxing: Limiting what the AI can do and where it can act.

- Decentralized identity frameworks: Reducing universal access and restricting privileges.

These solutions are not yet widely available.

The Bottom Line: AI Browsers Are Powerful — and One Click Away From Chaos

Dewdney compares today’s AI browsers to the early days of email:

“Treat AI browsers the way early internet users treated email attachments — powerful, convenient, and one careless click away from chaos.”

AI browsers can significantly change how people access information and complete tasks online. But they also introduce new risks, including:

- Invisible harmful prompts

- Autonomous harmful actions

- Credential exposure

- Manipulated transactions

- Exploited agent behavior

- Large-scale data theft

As agentic browsing becomes common, cybersecurity must evolve quickly — or even faster.

Until then, users should be extremely cautious.